How Search Engines Find Your Blog: A Beginner’s Guide

Learn the basics of crawling and indexing and discover practical steps you can take to help search engines discover, understand, and rank your blog posts.

The Two Steps to Getting Found on Search Engines

Billions of blog posts are published every year, yet only a small fraction ever appear on Google. That feeling of hitting "publish" and wondering if anyone will find your work is common. The journey from your screen to a search result page begins with two fundamental processes: crawling and indexing.

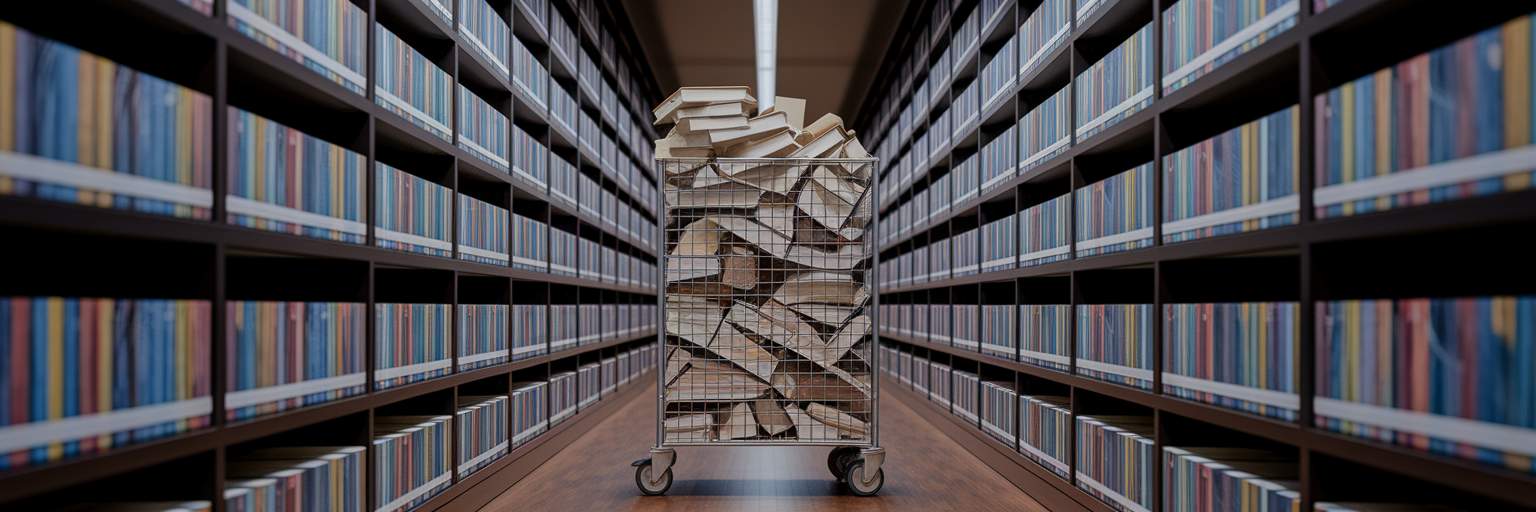

Think of a search engine as a librarian building a massive, global library. First, the librarian must discover new books. This discovery phase is crawling. Automated programs, often called "spiders" or "bots," travel across the internet, following links from page to page to find new and updated content. If a crawler never finds your blog post, it’s like a book that never makes it to the library. It remains invisible.

Once the librarian finds a book, the next step is to add it to the card catalog so people can find it. This organization phase is indexing. After crawling your page, the search engine analyzes its content (the text, images, and headings) and stores this information in a gigantic database. This database is the catalog from which all search results are pulled. Understanding crawling and indexing comes first because, as industry resources like Moz explain, a page cannot appear in organic listings without passing through both stages. Your content must be found, and then it must be understood and categorized.

How Search Bots Discover Your Content

So, how do these automated search bots actually find your blog in the first place? Their main job is to systematically browse the web and discover URLs. This process explains how search engines work at their most basic level: they follow paths to find information. They primarily do this in a few key ways.

Following the Trail of Links

The most common way crawlers discover content is by following hyperlinks. When a bot crawls a page it already knows about, it looks for links to pages it hasn't seen before. This is why internal links (linking between your own posts) and backlinks (links from other websites to yours) are so important. Each link is a new pathway for a crawler to find your content.
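As a rough illustration of link following, the sketch below pulls the links out of one page and separates the ones a crawler has not seen yet. It is a simplified stand-in, not how any real search engine is implemented. The `LinkCollector` class, the `discover_links` helper, and the example.com URLs are all hypothetical names chosen for this example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links against the page's own URL.
            self.links.append(urljoin(self.base_url, href))


def discover_links(page_url, html, already_seen):
    """Return (internal, external) lists of URLs not in already_seen."""
    collector = LinkCollector(page_url)
    collector.feed(html)
    site = urlparse(page_url).netloc
    internal, external = [], []
    for url in collector.links:
        if url in already_seen or url in internal or url in external:
            continue
        (internal if urlparse(url).netloc == site else external).append(url)
    return internal, external


# Hypothetical page content, for illustration only.
sample_html = """
<p>Read my <a href="/posts/first-post">first post</a> and
<a href="/posts/second-post">second post</a>, or visit
<a href="https://example.org/guide">this guide</a>.</p>
"""
seen = {"https://example.com/posts/first-post"}
new_internal, new_external = discover_links(
    "https://example.com/blog", sample_html, seen
)
print(new_internal)
print(new_external)
```

The first post is skipped because the crawler already knows about it. The second post is a newly discovered internal link, and the guide is a link out to another site.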

Your Blog's Personal Roadmap: The XML Sitemap

Instead of waiting for crawlers to stumble upon your pages, you can hand them a map directly. An XML sitemap is a file you create that lists all the important URLs on your blog. Submitting this sitemap to search engines is like giving the librarian a complete inventory of your books, ensuring they don't miss anything important in the stacks.
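If you are curious what a sitemap looks like under the hood, here is a minimal sketch that builds one with Python's standard library. Most blogging platforms generate this file for you, so treat it as an illustration only. The `build_sitemap` function, the example.com URLs, and the dates are assumptions made up for this example.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical posts, for illustration only.
posts = [
    ("https://example.com/posts/first-post", "2024-01-15"),
    ("https://example.com/posts/second-post", "2024-02-01"),
]
sitemap_xml = build_sitemap(posts)
print(sitemap_xml)
```

Each `<url>` entry names one page and, optionally, when it last changed, which helps crawlers decide what to revisit.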

Setting Boundaries with robots.txt

You can also tell crawlers where not to go. A `robots.txt` file is a simple text file on your site that gives instructions to bots. You might use it to block them from crawling duplicate pages, private admin areas, or thank-you pages. It helps them focus their limited time on the content you actually want people to find. Keep in mind that `robots.txt` controls crawling, not indexing: a blocked URL can still show up in search results if other sites link to it.
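To see how a bot might read these rules, the sketch below feeds a small `robots.txt` to Python's built-in `urllib.robotparser` and asks which paths are allowed. The rules and the example.com paths are hypothetical, and real crawlers may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
robots_txt = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /thank-you/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

for path in ["/posts/first-post", "/wp-admin/settings", "/thank-you/"]:
    allowed = parser.can_fetch("*", "https://example.com" + path)
    print(path, "allowed" if allowed else "blocked")
```

Running a check like this before you publish a new rule is a cheap way to confirm you have not blocked a page you want found.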

Turning Your Blog Posts into Searchable Information

Once a crawler has successfully discovered and accessed your blog post, the indexing process begins. This is where the search engine moves from just finding your page to actually understanding it. The system renders your page, processing the code to see the content and layout just as a human visitor would. It then parses this information, breaking it down into signals it can categorize.

During indexing, the search engine extracts and analyzes several key elements to understand what your post is about:

- Textual Content: The most obvious element is the words on the page. The system analyzes the topics, keywords, and overall meaning of your writing.

- Media Context: Content is more than just text. The search engine looks at information like image alt text and video descriptions to understand the context of your media.

- Structural Signals: Your page’s structure provides important clues. Headings (H1, H2), title tags, and other HTML elements signal which parts of your content are most important.

All of this analyzed information is then stored in the index, a massive database that Google describes as containing hundreds of billions of web pages. Once your post is in this index, it becomes a candidate to appear in search results for relevant queries. This is the critical step that makes your content searchable. If this process fails, you can run into Google indexing issues, where your content exists but users can't find it.
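The extraction step can be pictured with a small sketch. It reads a page and collects the same kinds of signals listed above: the title, the headings, image alt text, and the body words. This is a toy model under simplifying assumptions, not a description of any search engine's actual pipeline. The `SignalExtractor` class and the sample page are hypothetical.

```python
from html.parser import HTMLParser


class SignalExtractor(HTMLParser):
    """Pulls out the title, headings, image alt text, and body words."""

    def __init__(self):
        super().__init__()
        self.signals = {"title": "", "headings": [], "alt_text": [], "words": []}
        self._current = None  # tag whose text is currently being captured

    def handle_starttag(self, tag, attrs):
        if tag in ("title", "h1", "h2"):
            self._current = tag
        elif tag == "img":
            alt = dict(attrs).get("alt")
            if alt:
                self.signals["alt_text"].append(alt)

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        if self._current == "title":
            self.signals["title"] = text
        elif self._current in ("h1", "h2"):
            self.signals["headings"].append((self._current, text))
        else:
            # Everything else is treated as body text.
            self.signals["words"].extend(text.lower().split())


# Hypothetical blog post, for illustration only.
page = """
<html><head><title>Sourdough Basics</title></head>
<body>
<h1>How to Bake Sourdough</h1>
<p>Start with a healthy starter.</p>
<img src="loaf.jpg" alt="Golden sourdough loaf">
<h2>Feeding the Starter</h2>
<p>Feed it daily.</p>
</body></html>
"""
extractor = SignalExtractor()
extractor.feed(page)
signals = extractor.signals
print(signals["title"])
print(signals["headings"])
print(signals["alt_text"])
```

Notice that an image with no alt text would contribute nothing here, which is one reason descriptive alt text matters.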

Common Roadblocks That Hide Your Blog

Sometimes, you do everything right, but your blog still struggles to appear in search results. This often happens because of technical roadblocks that prevent crawling or indexing. Search engines allocate a finite amount of resources, or a "crawl budget," to any given website. If your site has problems, you can easily exhaust this budget before your most important posts are even found.

These issues waste a crawler's time, create dead ends, and signal to search engines that your site may be low quality, which can cause them to visit less frequently. While fresh posts on a healthy site can be indexed quickly, research from Backlinko indicates that the median time can stretch to 72 hours or more for sites with technical problems. Staying on top of these technical aspects is a key part of any modern content strategy, and you can read about other blogging trends successful creators are using to get ahead. Here are the most common roadblocks that can hurt your efforts to improve blog visibility.

| Roadblock | Why It Hides Your Blog | How You Can Check It |
|---|---|---|
| Slow Page Speed | Crawlers may give up before your page fully loads, wasting your crawl budget. | Use Google's PageSpeed Insights tool for a free report. |
| Broken Links (404s) | These create dead ends for crawlers, preventing them from discovering other pages. | Check the 'Pages' report (formerly 'Coverage') in Google Search Console for errors. |
| Duplicate Content | Signals to search engines that your site offers low value, reducing how often they crawl it. | Manually review your posts for significant overlap or use an online plagiarism checker. |
| Blocked by robots.txt | You might be accidentally telling crawlers not to visit important pages. | Review your `robots.txt` file (e.g., yoursite.com/robots.txt) for 'Disallow' rules. |
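To make the "dead end" idea concrete, here is a small sketch that looks for internal links pointing at pages that do not exist. It works on a hand-written map of a site instead of making real network requests, so it is only a model of what a tool like Google Search Console reports. The `find_broken_links` function and the page paths are hypothetical.

```python
def find_broken_links(site):
    """Return (source_page, missing_target) pairs for links to unknown pages."""
    broken = []
    for page, links in site.items():
        for target in links:
            if target not in site:
                broken.append((page, target))
    return broken


# Hypothetical site map: each page lists the internal pages it links to.
site = {
    "/": ["/posts/first-post", "/posts/second-post"],
    "/posts/first-post": ["/posts/second-post", "/posts/old-draft"],
    "/posts/second-post": ["/"],
}
broken = find_broken_links(site)
print(broken)
```

Here the first post links to a draft that was never published, so a crawler following that link would hit a 404.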

Practical Steps to Speed Up Discovery

Knowing the problems is one thing, but fixing them is what gets results. You can take several practical steps to remove roadblocks and help search engines find and index your content more efficiently. These actions are your direct line of communication with search engines, giving you more control over your blog's visibility.

- Create and Submit an XML Sitemap: As mentioned earlier, a sitemap is a map of your blog. Most blogging platforms, like WordPress, can automatically generate one for you with a simple plugin. Once you have it, you can submit it to search engines directly through a free tool like Google Search Console. This is the most direct way to tell Google about all the pages you want it to crawl.

- Use Google Search Console: This free tool is essential for any blogger. Think of it as your blog's health dashboard. It allows you to monitor your site's indexing status, see if crawlers are running into errors, and check for security issues. You can even use its "URL Inspection" tool to request indexing for a specific new post, which can speed up the discovery process.

- Add Structured Data (Schema Markup): This may sound technical, but the concept is simple. Structured data is a standardized format for providing information about a page and classifying its content. It’s like adding labels to your blog post that say, "This is an article," "The author is Jane Doe," or "This is a recipe with a 25-minute cook time." This helps search engines understand your content more precisely and can make your posts eligible for special features in search results, such as review stars or recipe details.
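For a sense of what structured data looks like, the sketch below builds a basic schema.org `Article` label in JSON-LD, one common format for this markup. Plugins usually produce it for you, so this is only an illustration. The `article_json_ld` function and the publication date are made up for this example, and a real post would likely include more properties.

```python
import json


def article_json_ld(headline, author_name, date_published):
    """Return a <script> tag holding schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Hypothetical values, for illustration only.
snippet = article_json_ld(
    "How Search Engines Find Your Blog", "Jane Doe", "2024-03-01"
)
print(snippet)
```

The resulting `<script>` tag goes in the page's HTML, where search engines can read the labels without changing what visitors see.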

As you implement these steps, you can find more guides and resources to help grow your audience on our main blog. These actions are not just theoretical; they lead to tangible results by helping your blog get indexed faster and more reliably.

Your Quick-Start Checklist for Visibility

Getting started doesn't have to be complicated. If you want to get your blog on Google and ensure your hard work gets seen, focus on these essential maintenance tasks. This checklist covers the most critical actions you can take right now to improve your blog's health.

- Check Your Site Speed: Use a free tool like Google's PageSpeed Insights to test your loading time. A faster site is friendlier to both users and crawlers.

- Fix Broken Links: Regularly use Google Search Console to find and fix any links that lead to "404 not found" error pages. Each broken link is a dead end for a crawler.

- Submit Your Sitemap: Make sure you have an XML sitemap and have submitted it via Google Search Console. This gives search engines a clear and updated map of your content.

- Monitor for Errors: Get into the habit of checking the "Pages" report (formerly "Coverage") in Google Search Console. It will tell you which pages have indexing problems and why.

Think of these actions not as a one-time fix but as ongoing maintenance. Just like tending to a garden, consistently caring for your blog's technical health ensures it remains visible and accessible to search engines and, most importantly, to your future readers.